Securing Kubernetes: Implementing Container Signature Verification with Cosign and Kyverno

In the previous post in this technical series, we explained how Cosign and Kyverno together make it easier and faster to sign and verify the container images that run on Kubernetes clusters, protecting your infrastructure from both accidental errors and malicious attacks. This time, we’re fulfilling our promise to dive into the actual code we use to do it.

Verifying container image signatures is quickly becoming an essential part of a secure Kubernetes cluster. With container signature verification, you can be sure that only images from trusted sources, like your organization’s CI/CD environment, are allowed to be deployed to your clusters, adding another hurdle for external attackers and insider threats. This includes not only the container images that your organization creates in-house, but also the many third-party Kubernetes components that are regularly deployed to a cluster. While there are a few different projects that support container image signature verification, Virtru’s SRE team chose Kyverno.

Kyverno is a powerful Kubernetes policy engine designed to generate, validate, and mutate Kubernetes resources based on user-created policies. With its many features and ease of use, we deemed it to be the best fit out of the available options.

Using Kyverno, you can verify signatures from a variety of sources, including your own self-hosted public/private key pair and KMS keys from different cloud providers. In this example, we’ll be showing how we use a combination of Cosign, Terraform, Kyverno, and a GCP KMS key on a Google Kubernetes Engine (GKE) cluster to verify our container images.

Kyverno

After Kyverno is deployed to your cluster, perhaps using a GitOps tool like Argo CD, you’ll need a Kyverno policy to verify that all Pods contain images signed with a specified key. The two policies below cover both cases: the first enables verification of signatures from another project (external-secrets, signed keylessly from GitHub Actions), and the second verifies our own images against a GCP KMS key.

apiVersion: kyverno.io/v1
kind: ClusterPolicy
metadata:
  name: external-secrets-policy
spec:
  # Block, rather than only report, Pods that fail verification.
  validationFailureAction: enforce
  webhookTimeoutSeconds: 30
  # Reject the request if the webhook itself errors or times out.
  failurePolicy: Fail
  background: true
  rules:
    - name: external-secrets-policy
      match:
        any:
          - resources:
              kinds:
                - Pod
      verifyImages:
        - imageReferences:
            - "ghcr.io/external-secrets/external-secrets:*"
          attestors:
            - entries:
                - keyless:
                    # Identity and OIDC issuer expected in the signing certificate.
                    subject: "https://github.com/external-secrets/external-secrets*"
                    issuer: "https://token.actions.githubusercontent.com"

---
apiVersion: kyverno.io/v1
kind: ClusterPolicy
metadata:
  name: virtru-apps-policy
spec:
  validationFailureAction: enforce
  background: false
  webhookTimeoutSeconds: 30
  failurePolicy: Fail
  rules:
    - name: check-image
      match:
        resources:
          kinds:
            - Pod
      verifyImages:
        # In-house images must be signed with version 1 of this GCP KMS key.
        - image: "us-docker.pkg.dev/project123/apps/*"
          key: "gcpkms://projects/project123/locations/us/keyRings/test_keyring/cryptoKeys/cosign_key/versions/1"
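One way to smoke-test policies like these is to apply them and then try to start a Pod from an image that has never been signed. The sketch below is only an illustration: the manifest file name, the Pod name, and the unsigned image tag are hypothetical, and by default the script prints the commands instead of running them so they can be reviewed first.

```shell
#!/bin/sh
set -eu

# Hypothetical names: adjust the manifest path and test image to your setup.
POLICY_FILE="policies.yaml"
UNSIGNED_IMAGE="us-docker.pkg.dev/project123/apps/unsigned-test:latest"

# Print each command unless DRY_RUN=0 is set, in which case execute it.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# 1. Install the ClusterPolicy objects.
run kubectl apply -f "$POLICY_FILE"

# 2. Confirm that Kyverno has registered them.
run kubectl get clusterpolicy

# 3. Try to start an unsigned image that matches the virtru-apps-policy
#    pattern. With validationFailureAction set to enforce, this request
#    is expected to be denied at admission.
run kubectl run signature-test --image="$UNSIGNED_IMAGE" --restart=Never
```

Running it with `DRY_RUN=0` executes the commands against whichever cluster the current kubectl context points to, so it is best tried on a non-production cluster first.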

Workload Identity

The best way to give Kyverno access to GCP resources is using Workload Identity on your GKE clusters. Once Workload Identity is enabled on the cluster, you’ll need to bind the Kyverno service account on your Kubernetes cluster to a GCP service account with the proper roles.

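module "kyverno-workload-identity" {
  source = "terraform-google-modules/kubernetes-engine/google//modules/workload-identity"

  # Reuse the Kubernetes service account that the Kyverno install created.
  use_existing_k8s_sa = true
  name                = "kyverno"
  namespace           = "kyverno"
  project_id          = "project123"

  # Roles granted to the GCP service account so Kyverno can read and verify with KMS keys.
  roles = [
    "roles/cloudkms.viewer",
    "roles/cloudkms.verifier",
  ]
}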

The Terraform module above creates a GCP service account named “kyverno” in the project123 project, grants it the “roles/cloudkms.viewer” and “roles/cloudkms.verifier” roles, and binds the Kubernetes Kyverno service account to it through the “roles/iam.workloadIdentityUser” role. Your organization will need to decide how best to scope the GCP service account so that it has only the minimal permissions required for the GCP resources it needs to access.
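To check the binding by hand, it helps to know the two strings that Workload Identity relies on: the annotation on the Kubernetes service account and the IAM member that holds the Workload Identity user role. The sketch below derives both from the example values in this post and prints the commands that could be used to compare them with the live state. It assumes the GCP service account is named after the module's `name` input; nothing here changes any resource.

```shell
#!/bin/sh
set -eu

PROJECT_ID="project123"
NAMESPACE="kyverno"
KSA_NAME="kyverno"   # Kubernetes service account
GSA_NAME="kyverno"   # GCP service account (assumed to match the module's name input)

# E-mail of the GCP service account and the Workload Identity principal
# that is allowed to impersonate it.
GSA_EMAIL="${GSA_NAME}@${PROJECT_ID}.iam.gserviceaccount.com"
WI_MEMBER="serviceAccount:${PROJECT_ID}.svc.id.goog[${NAMESPACE}/${KSA_NAME}]"

echo "Expected annotation on ${NAMESPACE}/${KSA_NAME}:"
echo "  iam.gke.io/gcp-service-account: ${GSA_EMAIL}"
echo "Expected member of roles/iam.workloadIdentityUser:"
echo "  ${WI_MEMBER}"

# Commands to inspect the live state (printed here, not executed).
echo "kubectl get serviceaccount ${KSA_NAME} -n ${NAMESPACE} -o yaml"
echo "gcloud iam service-accounts get-iam-policy ${GSA_EMAIL}"
```

If the annotation or the IAM member is missing, Kyverno's requests to Cloud KMS will not be authorized as the GCP service account and signature verification against the KMS key is likely to fail.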

KMS code

If your organization would like to sign the container images that your developers are creating and deploying to your clusters, the KMS key needs to be in a specific format to be compatible with Cosign. The Cosign CLI has a built-in subcommand that creates such a key.

> cosign generate-key-pair --kms gcpkms://projects/project123/locations/global/keyRings/test_keyring/cryptoKeys/cosign_key

Using the gcloud CLI, we can inspect the purpose and algorithm of the key that Cosign created.

> gcloud kms keys describe projects/project123/locations/global/keyRings/test_keyring/cryptoKeys/cosign_key
createTime: '2022-01-06T01:47:10.360168836Z'
destroyScheduledDuration: 86400s
name: projects/project123/locations/global/keyRings/test_keyring/cryptoKeys/cosign_key
purpose: ASYMMETRIC_SIGN
versionTemplate:
  algorithm: EC_SIGN_P256_SHA256
  protectionLevel: SOFTWARE

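The same purpose and algorithm can then be declared in Terraform. Note that the exploratory key above lives in the global location, whereas the module below creates the key in us, which is the location referenced by the key URI in the Kyverno policy.

module "kms" {
  source  = "terraform-google-modules/kms/google"
  version = "~> 2.2"

  project_id = "project123"
  location   = "us"
  keyring    = "test_keyring"
  keys       = ["cosign_key"]

  # Match the format of the key that Cosign generates.
  purpose       = "ASYMMETRIC_SIGN"
  key_algorithm = "EC_SIGN_P256_SHA256"

  set_owners_for = ["cosign_key"]
  owners = [
    "group:one@example.com",
  ]
}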
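With the key in place, signing and checking an image by hand is a useful sanity test before relying on the cluster policy. The sketch below assembles the `gcpkms://` URI from the values used in this post and shows the corresponding `cosign sign` and `cosign verify` calls. The image name and the key version are illustrative placeholders, and the commands are only printed unless `RUN_COSIGN=1` is set.

```shell
#!/bin/sh
set -eu

# Key coordinates from the Terraform example; VERSION and IMAGE are
# illustrative placeholders.
PROJECT_ID="project123"
LOCATION="us"
KEYRING="test_keyring"
KEY_NAME="cosign_key"
VERSION="1"
IMAGE="us-docker.pkg.dev/project123/apps/example-app:1.0.0"

KMS_URI="gcpkms://projects/${PROJECT_ID}/locations/${LOCATION}/keyRings/${KEYRING}/cryptoKeys/${KEY_NAME}/versions/${VERSION}"

# The key field of the Kyverno policy has to reference this same key,
# including the location segment.
echo "KMS key URI: ${KMS_URI}"

# Sign, then verify. Commands are printed unless RUN_COSIGN=1 is set.
for action in sign verify; do
  if [ "${RUN_COSIGN:-0}" = "1" ]; then
    cosign "$action" --key "$KMS_URI" "$IMAGE"
  else
    echo "cosign $action --key $KMS_URI $IMAGE"
  fi
done
```

Signing requires an identity that is allowed to sign with the key, which is a different permission from the viewer and verifier roles granted to Kyverno. In practice it is usually safer to sign an image by digest rather than by tag, since a tag can later point to different content.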

Now that you've assembled all the pieces, combining cosign signatures with Kyverno signature verification, you can be sure that unsigned and unapproved images will be prevented from being deployed onto your clusters!

See Virtru In Action

Sign Up for the Virtru Newsletter

Dive Deeper

How to Send Encrypted Attachments in Outlook: A Complete Guide for 2026

Mergers and Acquisitions Security: How to Protect What Matters Most

/blog%20-%20Virtru%20Collaborate%20FinServ/collab-finserv.webp)

Take Control of Your Financial Data with Virtru’s Secure Collaborative Workspace

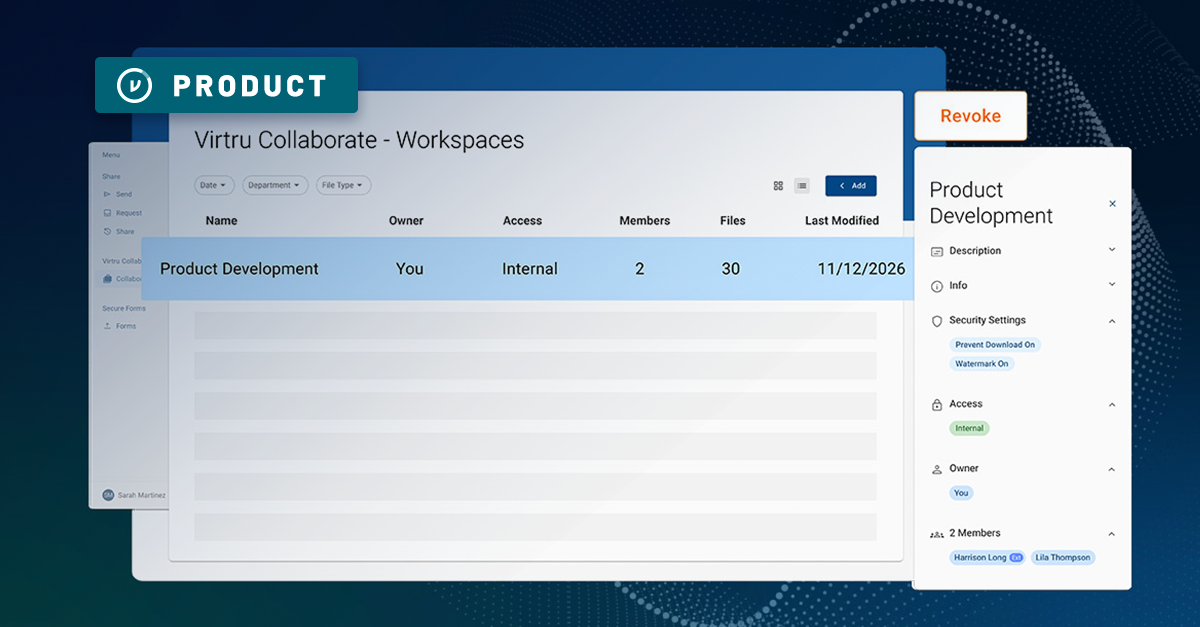

Introducing Virtru Collaborate: Create Secure, Governed Workspaces for External Sharing

Take Control of Your CUI with Virtru Collaborate for CMMC

Virtru Collaborate vs PreVeil Drive: Choosing the Right File Enclave for CUI Workflows

/blog%20-%20cmmc%20may%202026%20faq/may2026faq.webp)

What the May 2026 CMMC FAQ Means for Contractors Handling CUI

ITAR Compliant File Sharing: The Encryption Carve-Out Explained

/blog%20-%20enclave%20provider%20closing%20doors/enclave-closing-doors.webp)

When Your CMMC Enclave Provider Closes Its Doors: Why Ownership Matters More Than Ever

Three Strikes, You're Out: MOVEit's Latest Critical Flaw and What Comes Next

/blog%20-%20microsoft%20legal%20AI/miscrosoftlegal%20copy.webp)

Why Microsoft's New Legal Agent Needs Data-Centric Security to Deliver on Its Promise

Book a Demo

Become a Partner

Contact us to learn more about our partnership opportunities.

Become a Compliance Champion

Contact us to learn more about our partnership opportunities.