As Eric Hughes wrote 30 years ago in his famous cypherpunk manifesto, "Privacy is the power to selectively reveal oneself to the world."

Over the course of my career as a software marketer, I've invested an enormous amount of time and money into search engine optimization (SEO) with the explicit goal of revealing my organization's content to search engines like Google. The motivation was simple: selectively reveal more content, rank higher in search results, connect contextually with more customers, and drive more business. A huge part of the effort centered on leveraging meta tags, title tags, heading tags, etc. to apply structure to information that was otherwise unstructured. By properly structuring content, it was possible to signal to the search algorithms what mattered; e.g., "pay attention to this content, but ignore that."

But now, with the advent of artificial intelligence and large language models (LLMs), we are seeing a major irony unfold. On the one hand, businesses still want to selectively "reveal certain information" to search engines for purposes of discovery; on the other hand, these same businesses want to selectively "conceal certain information" from LLMs in the name of data privacy and IP protection.

The concern, of course, is that if sensitive business data is scraped into the copious training data used by LLMs, it could be exposed in unpredictable ways. So companies today are investing in solutions to help them discover, classify, and tag their entire data estate so they can then apply and enforce granular policies that selectively control how data is revealed, or not.
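To make the "classify, tag, then enforce" idea concrete, here is a minimal sketch in Python. Everything in it is an illustrative assumption rather than any product's actual behavior: the tag names, the regex rules in `RULES`, the audiences in `BLOCKED_TAGS`, and the helper names `classify` and `may_reveal` are all hypothetical. Real classification tools use far richer detection than keyword patterns.

```python
import re
from dataclasses import dataclass, field

# Hypothetical detection rules: tag name -> pattern suggesting the tag applies.
RULES = {
    "pii": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),  # SSN-like number
    "confidential": re.compile(r"\bconfidential\b", re.IGNORECASE),
    "proprietary": re.compile(r"\bproprietary\b", re.IGNORECASE),
}

# Hypothetical policy: tags that block release to each audience.
# Proprietary content may be findable via search but is kept out of LLM training.
BLOCKED_TAGS = {
    "search_engine": {"pii", "confidential"},
    "llm_training": {"pii", "confidential", "proprietary"},
}


@dataclass
class Document:
    name: str
    text: str
    tags: set = field(default_factory=set)


def classify(doc: Document) -> Document:
    """Attach a tag for every rule whose pattern appears in the text."""
    for tag, pattern in RULES.items():
        if pattern.search(doc.text):
            doc.tags.add(tag)
    return doc


def may_reveal(doc: Document, audience: str) -> bool:
    """Allow release only if none of the document's tags is blocked for the audience."""
    return not (doc.tags & BLOCKED_TAGS[audience])


post = classify(Document("blog.md", "Our new product launches next week."))
paper = classify(Document("paper.md", "Proprietary benchmark methodology."))
memo = classify(Document("memo.txt", "CONFIDENTIAL: acquisition target list"))

print(may_reveal(post, "llm_training"))    # True
print(may_reveal(paper, "search_engine"))  # True
print(may_reveal(paper, "llm_training"))   # False
print(may_reveal(memo, "search_engine"))   # False
```

The point of the sketch is the separation of concerns: tagging happens once, and the decision to reveal or conceal is made per audience from those tags, which is what lets the same piece of content be open to search yet closed to model training.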

In the past, SEO was about structuring and optimizing for findability. Today, data privacy efforts are about selectively preventing sensitive information from accidentally leaking into AI systems and LLM training data.

This dynamic represents a thick irony between the business mindset of the past and the mindset of the future, which must contend with all things AI. Further, it underscores the critical need to adopt data-centric security and granular controls so you can selectively balance openness and transparency with privacy and security.

The SEO era was binary: put everything out there to be found.

The privacy era will be far more nuanced: discover, classify, and tag EVERYTHING so you can control at a granular level the data you expose, and the data you don't.

Matt Howard

A proven executive and entrepreneur with over 25 years of experience developing high-growth software companies, Matt serves as Virtru’s CMO and leads all aspects of the company’s go-to-market motion within the data protection and Zero Trust security ecosystems.

View more posts by Matt HowardSee Virtru In Action

Sign Up for the Virtru Newsletter

Dive Deeper

Secure File Sharing for Law Firms: Persistent Control for M&A and Litigation

Secure Enclaves, Explained: 5 Pillars of Enclave Cybersecurity

/blog%20-%20gartner%20job%20listing/gartner-job-listing.webp)

Before Gartner Summit: This Fortune 500 Job Posting Reveals Data Security's Biggest Gap

How to Send Encrypted Attachments in Outlook: A Complete Guide for 2026

Mergers and Acquisitions Security: How to Protect What Matters Most

/blog%20-%20Virtru%20Collaborate%20FinServ/collab-finserv.webp)

Take Control of Your Financial Data with Virtru’s Secure Collaborative Workspace

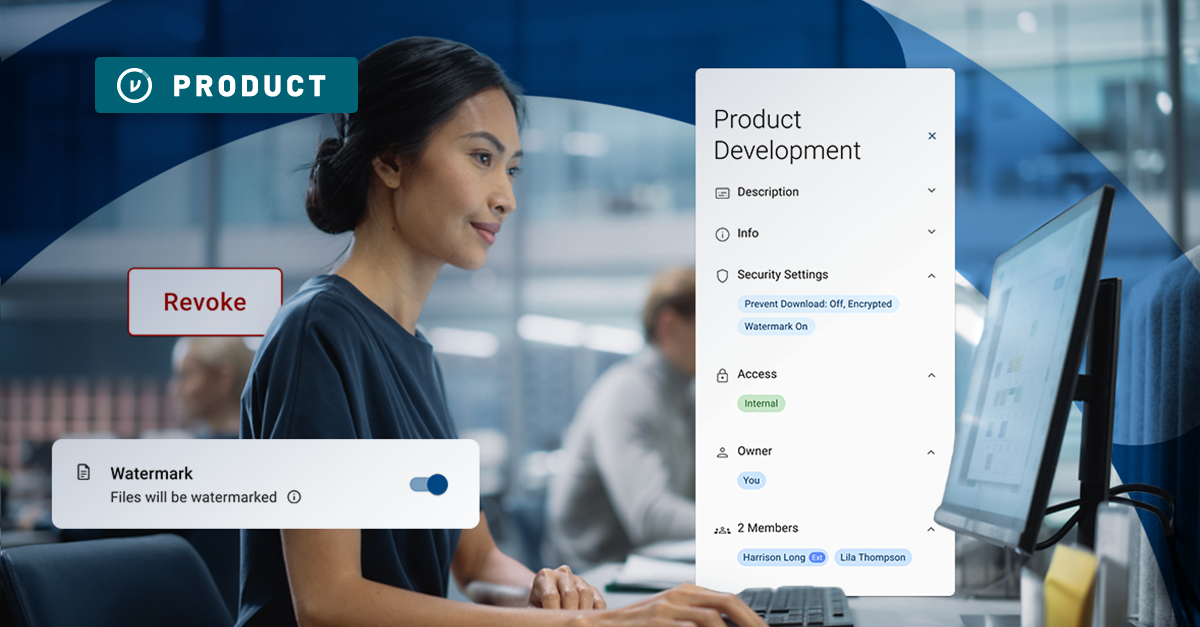

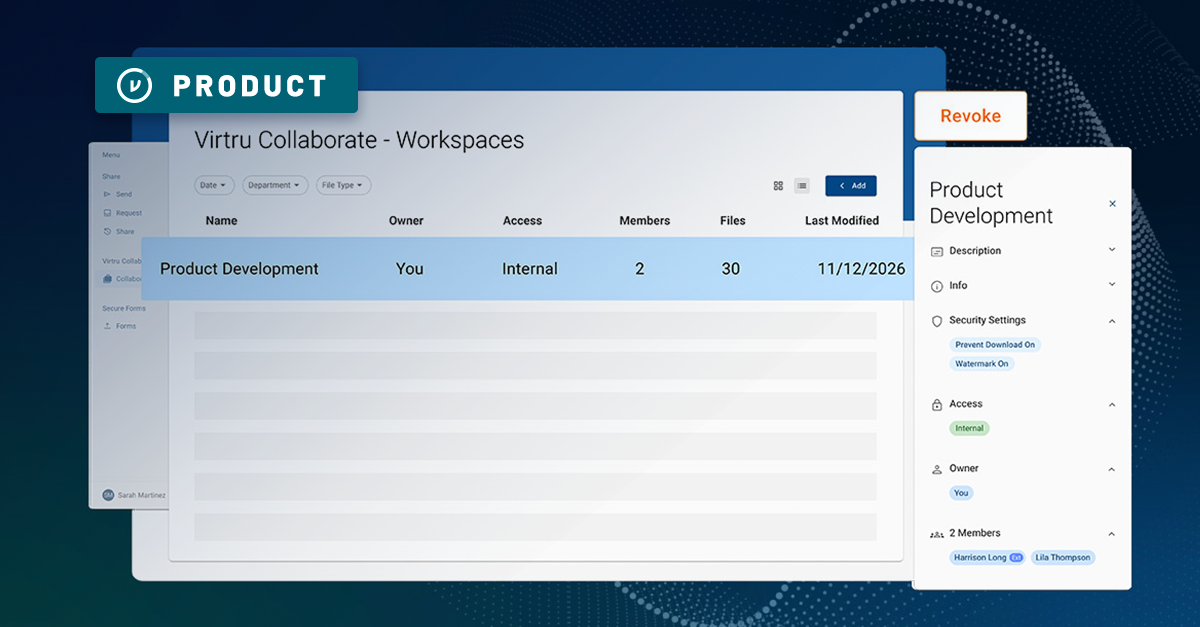

Introducing Virtru Collaborate: Create Secure, Governed Workspaces for External Sharing

Take Control of Your CUI with Virtru Collaborate for CMMC

Virtru Collaborate vs PreVeil Drive: Choosing the Right File Enclave for CUI Workflows

/blog%20-%20cmmc%20may%202026%20faq/may2026faq.webp)

What the May 2026 CMMC FAQ Means for Contractors Handling CUI

ITAR Compliant File Sharing: The Encryption Carve-Out Explained

Book a Demo

Become a Partner

Contact us to learn more about our partnership opportunities.

Become a Compliance Champion

Contact us to learn more about our partnership opportunities.