Managing Risks of Sharing Sensitive Information: ChatGPT vs. Email

This article published in The Wall Street Journal made me stop and think.

At a time when the global economy is wrestling with both the incredible potential and the enormous privacy risks of ChatGPT and generative AI, why aren’t more people asking themselves a simple question: Is the risk of sharing sensitive data externally with large language models at all similar to the risk of sharing sensitive data externally via email?

In my humble opinion, the answer is yes.

To that end, the purpose of this post is to explore the parallels between these two scenarios and discuss how the right tools can help mitigate risks, particularly when it comes to protecting the massive amounts of sensitive data that employees voluntarily share every day via email.

The Similarities: ChatGPT and Email Risks

When it comes to sharing sensitive information, ChatGPT and email workflows pose similar risks, including:

- Data Leakage: Just as with email, there is a possibility that employees may inadvertently paste sensitive information into ChatGPT or similar generative AI tools. If this occurs, the data may be at risk of being leaked, accessed by unauthorized individuals, or even used to train the AI model.

- Human Error: Both ChatGPT and email workflows involve human interaction, which introduces the potential for mistakes. Employees may accidentally disclose sensitive data to ChatGPT, thinking it's a harmless conversational tool. Similarly, an email containing sensitive information can be sent to an unintended recipient or to a mistyped address.

Best Practices for Mitigating Risks

To minimize the risk of sensitive data being accidentally exposed via ChatGPT or email workflows, consider the following best practices:

- Employee Training: Educate your employees about the risks of sharing sensitive information with third parties via ChatGPT or email workflows. Instruct them not to disclose sensitive information to ChatGPT or any other generative AI tool. Similarly, teach proper email security practices, such as using encryption and double-checking recipients before sending sensitive information. This also requires employees to understand exactly what data is considered sensitive: make sure they know what falls under Personally Identifiable Information (PII) and Protected Health Information (PHI), and what kinds of information are subject to the compliance regulations in your industry (think HIPAA, CJIS, ITAR, and others). Failing to communicate this clearly could ultimately result in a breach or a compliance violation.

- Upstream Data Discovery, Classification, and Tagging: If you’re like most organizations, you might be overwhelmed by huge amounts of data. It is therefore critical to invest in proper data discovery so you know what you have and where it is located. Once discovery is done, you can classify your data as sensitive or not sensitive. And once data has been classified, you can apply tags or labels (attributes) that identify the most sensitive data. Collectively, these upstream data governance efforts enable your organization to adopt downstream data security controls, so you can define and enforce policy and minimize leakage via channels like ChatGPT and email.

- Downstream Security Controls: Once your data has been tagged, it has attributes. With attributes, you can automatically apply policy using standards like the Trusted Data Format (TDF) to control who can access the data, how it can be used, and for how long, even after it's been shared with others via email workflows. You can also implement similar controls for ChatGPT use cases, where filters act as guardrails and automatically prevent sensitive data from being shared with generative AI tools.
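To make the upstream tagging and downstream guardrail idea more concrete, here is a minimal Python sketch. It is only an illustration under stated assumptions: the regex patterns, the tag names, the `classify` and `decide` functions, and the "block" / "encrypt" / "allow" outcomes are all hypothetical, and it does not implement the Trusted Data Format or any vendor's product. Real discovery and classification tools rely on far richer detection than two regular expressions.

```python
import re
from dataclasses import dataclass, field

# Hypothetical detection patterns, for illustration only.
PATTERNS = {
    "PII:ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHI:mrn": re.compile(r"\bMRN[:\s]*\d{6,10}\b", re.IGNORECASE),
}


@dataclass
class TaggedText:
    """A piece of outbound text plus the sensitivity tags found in it."""
    text: str
    tags: set = field(default_factory=set)


def classify(text: str) -> TaggedText:
    """Upstream step: attach a tag for every pattern that matches."""
    tags = {label for label, pattern in PATTERNS.items() if pattern.search(text)}
    return TaggedText(text=text, tags=tags)


def decide(item: TaggedText, channel: str) -> str:
    """Downstream step: choose an action based on tags and channel.

    Assumed policy: tagged data never goes to a generative AI tool,
    and tagged data sent by email must be protected first.
    """
    if not item.tags:
        return "allow"
    if channel == "genai":
        return "block"
    return "encrypt"


if __name__ == "__main__":
    draft = classify("Patient SSN is 123-45-6789")
    print(sorted(draft.tags), decide(draft, "genai"), decide(draft, "email"))
```

The design choice worth noting is that classification happens once, and the resulting tags drive different decisions per channel. The same tagged text is stopped before it reaches a generative AI tool but can still be shared by email once it is protected.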

ChatGPT and Email Collaboration: Similar Risks Call for Similar Data Protections

The risks associated with sharing sensitive information with ChatGPT are remarkably similar to those involved in sending out emails containing sensitive data. However, organizations can effectively mitigate these risks by implementing proper measures including employee training, upstream data governance, and downstream data controls.

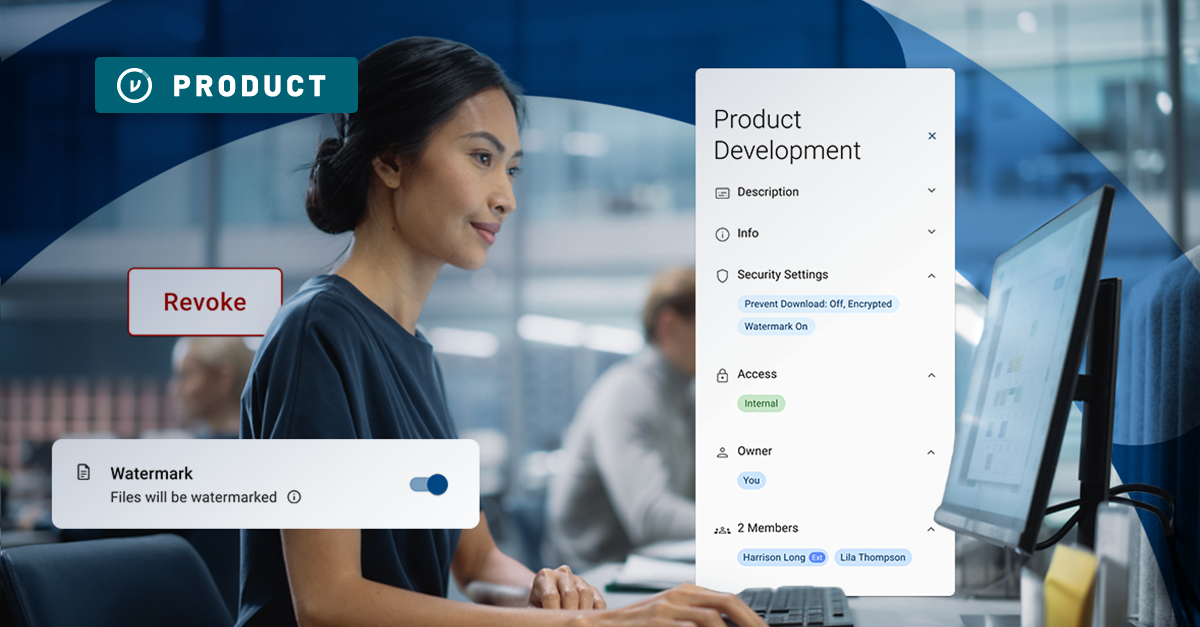

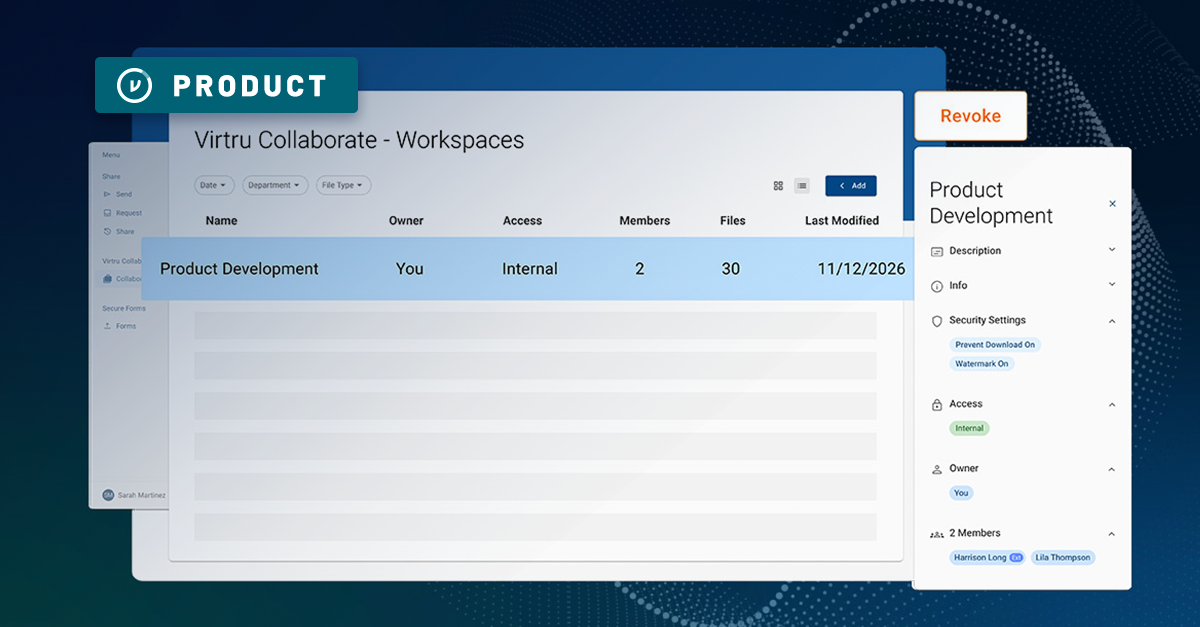

To control risks associated with downstream email workflows, consider implementing best-in-class email security tools like Virtru, which adds an extra layer of protection, ensuring that sensitive data shared via email workflows remains secure. By adopting these practices and leveraging the right tools, organizations can confidently share sensitive data externally via email and ChatGPT workflows.

Matt Howard

A proven executive and entrepreneur with over 25 years of experience developing high-growth software companies, Matt serves as Virtru’s CMO and leads all aspects of the company’s go-to-market motion within the data protection and Zero Trust security ecosystems.
