The Microsoft Copilot Glitch: A Wake-Up Call for Data Sovereignty in AI

In the rush to adopt GenAI, organizations have leaned heavily on the promises of "built-in" security. We are told that our cloud providers can be a one-stop shop: Hosting our most sensitive data, providing the AI tools to analyze it, and simultaneously acting as the gatekeepers ensuring that data remains private.

However, a recent incident involving Microsoft 365 Copilot has exposed the cracks in this "all-in-one" trust model. It serves as a stark reminder that when you hand over your encrypted content and your keys to the same provider, your security is only as strong as their latest software update.

Here's what happened with Copilot, and what we can learn from it.

The Incident: Is "Confidential" Really Confidential?

According to a report by BleepingComputer, a significant bug in Microsoft 365 Copilot had been undermining Data Loss Prevention (DLP) policies since late January.

The issue affected the Copilot "work tab" chat feature. Despite organizations configuring specific DLP policies and applying "confidential" sensitivity labels to restrict data access, the AI tool was able to bypass these guardrails. As reported by BleepingComputer, the bug allowed Copilot to read and summarize emails stored in users' Sent Items and Drafts folders, "including messages that carry confidentiality labels explicitly designed to restrict access by automated tools."

Microsoft confirmed the behavior in a statement regarding the ongoing service alert, acknowledging that "Users' email messages with a confidential label applied are being incorrectly processed by Microsoft 365 Copilot chat," and that this occurred "even though these email messages have a sensitivity label applied and a DLP policy is configured."

While Microsoft has since stated that the root cause has been addressed, and noted that the bug "did not provide anyone access to information they weren’t already authorized to see" (since users were summarizing their own emails), this defense misses the broader architectural warning. Nor is the incident without precedent in the Microsoft ecosystem.

Encryption Without Enforceable Policy Is an Empty Promise

This incident highlights a critical vulnerability in cloud data security: The risk of trusting your cloud provider with both the lock and the key.

In this scenario, the emails were supposedly protected by encryption and policy labels. However, because the cloud provider (Microsoft) held the keys to decrypt that data so the AI could process it, the "Confidential" label was merely a software flag: a request to "please don't look at this."

That's not strong data governance, especially for data classified as confidential. While the label existed, no enforceable control stood behind it beyond the provider's own software. Nothing technical kept Copilot from accessing and processing that data. The encryption offered no real protection or access limitation, because Microsoft held every piece of the puzzle: The content, the encryption keys, the platform, and the AI software with overextended access.

We've written about similar Microsoft incidents before, notably the January 2026 BitLocker controversy (where Microsoft handed over encryption keys to law enforcement), shortfalls in Microsoft's Office 365 Message Encryption (OME), and a Chinese nation-state cyberattack that affected the Outlook email of 25 U.S. government agencies in 2023.

A Software Flag Isn't True Separation

When a "code error" occurred, that software flag failed. Because the provider possessed the technical capability to decrypt and read the data to fuel the AI, the data was exposed to the model the moment the logic gate malfunctioned.
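To make the "software flag" point concrete, here is a minimal Python sketch of a label-gated AI pipeline. Every name in it (`Email`, `policy_allows`, `fetch_for_ai`, the folder strings) is hypothetical, and the folder-specific bug is invented for illustration; it is not Microsoft's code and not the actual root cause, which has not been described in that detail. What it shows is that when the platform can read the content, a label protects data only on the code paths that remember to check it.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical names throughout; this is NOT Microsoft 365 code. It models a
# policy that exists only as a check inside application logic.

@dataclass
class Email:
    folder: str
    label: str
    body: str  # readable by the platform, because the platform holds the keys

BLOCKED_LABELS = {"Confidential"}

def policy_allows(email: Email) -> bool:
    """The 'software flag': a label lookup, not a cryptographic barrier."""
    return email.label not in BLOCKED_LABELS

def fetch_for_ai(email: Email) -> Optional[str]:
    """Return the text an AI assistant may process, or None if blocked."""
    if email.folder in {"Sent Items", "Drafts"}:
        # Invented bug: this branch forgets to call policy_allows().
        return email.body
    return email.body if policy_allows(email) else None

inbox_msg = Email("Inbox", "Confidential", "Q3 merger terms")
draft_msg = Email("Drafts", "Confidential", "Q3 merger terms")

print(fetch_for_ai(inbox_msg))  # None (the label check ran)
print(fetch_for_ai(draft_msg))  # Q3 merger terms (the check was skipped)
```

Nothing in the data itself resists the buggy branch; the plaintext is always one missed `if` away from the model.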

If there had been true separation, with the data encrypted under keys held only by the customer (or even by a third party like Virtru), a code error in the AI tool would have left the AI seeing nothing but gibberish. As a reference point, here is what Google Gemini shows when it attempts to scan an email encrypted with Virtru's client-side email encryption. It can only access information in the designated plaintext fields, like the subject line.

And here's what it looks like when Gemini attempts to formulate an AI-generated reply to a client-side encrypted message.

In the case of this Copilot bug, instead of saying "This requires verification to unlock" or "I see tokens but don't know what they mean," Copilot was able to access, parse, and display confidential plaintext content it should never have been able to reach.

The stakes are higher now that we're in the era of ubiquitous AI tools. In the past, cloud storage was largely passive; data sat on a disk until a user opened it or specifically queried against it. Today, AI integrations mean that data is being continuously scanned, indexed, and vectorized.

If your encryption strategy relies on the cloud provider managing the keys along with your content, you are not truly hiding your data from AI; you are merely asking the AI not to do the simple math required to unlock the encrypted content. As this incident shows, a software bug can easily override that request.
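A minimal sketch of that "simple math," assuming a toy cipher built from Python's standard library (SHA-256 in counter mode as a keystream, XORed with the data) in place of a real algorithm such as AES-GCM. The function names and message layout are hypothetical. The only variable between the two scenarios is whether the platform feeding the AI holds the key; the plaintext `subject` field stands in for the designated plaintext fields, like the subject line, that Gemini could still read.

```python
import hashlib
import secrets
from typing import Dict, Optional, Tuple

# TOY cipher for illustration only: SHA-256 in counter mode produces a
# keystream that is XORed with the data. It is unauthenticated and unreviewed;
# real systems use a vetted AEAD such as AES-GCM.
def keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    out = b""
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest()
        counter += 1
    return out[:length]

def encrypt(key: bytes, plaintext: bytes) -> Tuple[bytes, bytes]:
    nonce = secrets.token_bytes(16)
    ks = keystream(key, nonce, len(plaintext))
    return nonce, bytes(p ^ k for p, k in zip(plaintext, ks))

def decrypt(key: bytes, nonce: bytes, ciphertext: bytes) -> bytes:
    ks = keystream(key, nonce, len(ciphertext))
    return bytes(c ^ k for c, k in zip(ciphertext, ks))

def ai_view(message: Dict[str, bytes], platform_key: Optional[bytes]) -> bytes:
    """What a hypothetical AI layer receives for a message body."""
    if platform_key is None:
        return message["ciphertext"]  # no key on the platform: opaque bytes only
    # The platform holds the key, so decryption is one function call away,
    # whatever label the message carries.
    return decrypt(platform_key, message["nonce"], message["ciphertext"])

data_key = secrets.token_bytes(32)
nonce, ciphertext = encrypt(data_key, b"Confidential: Q3 merger terms")
message = {"subject": b"Q3 planning", "nonce": nonce, "ciphertext": ciphertext}

# Scenario 1: provider-managed keys (key stored alongside the content).
provider_view = ai_view(message, platform_key=data_key)
# Scenario 2: customer-held keys (the platform never receives the key).
separated_view = ai_view(message, platform_key=None)

print(provider_view)         # b'Confidential: Q3 merger terms'
print(separated_view.hex())  # random-looking hex that changes on every run
print(message["subject"])    # b'Q3 planning' (a plaintext field by design)
```

The sketch deliberately isolates where the key lives rather than how strong the cipher is: swapping in AES-GCM would change nothing about scenario 1, because a platform that holds the key can always decrypt.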

Best Practice for Data Sovereignty: Separate Encryption Keys from Encrypted Content

To truly shield sensitive data from AI and prevent DLP bypasses, organizations must move toward an architecture of true separation of duties.

- External Encryption Key Management: Encryption keys should be generated, stored, and managed outside of the cloud provider’s environment. If the cloud provider holds the key, the data is never truly yours. This is what Virtru Private Keystore provides: Storage and management of your keys in the location of your choosing (including on-prem/HSM, or in a public or virtual private cloud).

- Shield Confidential Information from Your Cloud Provider: To ensure effective data sovereignty, sensitive data should be properly encrypted before it reaches the AI inference layer. Again, to be effective, encryption keys should be separate from the content.

- Zero Trust for AI: We must assume that any data visible to the cloud provider is potentially visible to their AI models, regardless of policy settings. The Zero Trust adage holds true: Never trust, always verify.
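The separation of duties in these practices can be sketched in Python. This is a hypothetical model, not Virtru Private Keystore's API: `CustomerKeystore`, `CloudProvider`, `open_message`, and the attribute strings are invented names; the cipher is a toy SHA-256 keystream built from the standard library (not for real use); and the keystore trusts the attributes it is handed rather than authenticating the requester. What it illustrates is that the provider stores only ciphertext, while the attribute-based check runs in the party that holds the key.

```python
import hashlib
import secrets
from typing import Dict, FrozenSet, Optional, Tuple

# TOY keystream cipher (SHA-256 in counter mode, XOR). Illustration only:
# unauthenticated and unreviewed; real systems use a vetted AEAD such as AES-GCM.
def xor_crypt(key: bytes, nonce: bytes, data: bytes) -> bytes:
    stream = b""
    counter = 0
    while len(stream) < len(data):
        stream += hashlib.sha256(key + nonce + counter.to_bytes(8, "big")).digest()
        counter += 1
    return bytes(d ^ s for d, s in zip(data, stream))

class CustomerKeystore:
    """Hypothetical customer-run key service, hosted outside the cloud provider."""

    def __init__(self) -> None:
        self._keys: Dict[str, bytes] = {}
        self._required: Dict[str, FrozenSet[str]] = {}

    def create_key(self, key_id: str, required_attrs: FrozenSet[str]) -> bytes:
        self._keys[key_id] = secrets.token_bytes(32)
        self._required[key_id] = frozenset(required_attrs)
        return self._keys[key_id]

    def release_key(self, key_id: str, attrs: FrozenSet[str]) -> Optional[bytes]:
        # ABAC-style rule: release only if the requester has every required
        # attribute. A real service would also authenticate the requester.
        if self._required[key_id] <= frozenset(attrs):
            return self._keys[key_id]
        return None

class CloudProvider:
    """Stores (nonce, ciphertext) pairs only; it never receives a key."""

    def __init__(self) -> None:
        self.blobs: Dict[str, Tuple[bytes, bytes]] = {}

def open_message(provider: CloudProvider, keystore: CustomerKeystore,
                 msg_id: str, attrs: FrozenSet[str]) -> Optional[bytes]:
    """Client-side open: ciphertext from the provider, key from the keystore."""
    nonce, ciphertext = provider.blobs[msg_id]
    key = keystore.release_key(msg_id, attrs)
    return None if key is None else xor_crypt(key, nonce, ciphertext)

keystore = CustomerKeystore()
provider = CloudProvider()

# The customer encrypts locally and uploads only ciphertext to the provider.
key = keystore.create_key("msg-1", frozenset({"employee", "clearance:confidential"}))
nonce = secrets.token_bytes(16)
provider.blobs["msg-1"] = (nonce, xor_crypt(key, nonce, b"Q3 merger terms"))

analyst = frozenset({"employee", "clearance:confidential"})
ai_assistant = frozenset({"ai-assistant"})  # e.g. an AI tool inside the provider
print(open_message(provider, keystore, "msg-1", analyst))       # b'Q3 merger terms'
print(open_message(provider, keystore, "msg-1", ai_assistant))  # None
```

Even if a bug in the provider's AI integration skipped every check it owns, the most it could pass to the model is the stored ciphertext, because no key ever lands on the provider side.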

The Microsoft Copilot bug is not just a one-off glitch. With AI sprawling across corporate environments, it is a significant proof of concept that software-based policy restrictions are fallible. Granular, enforceable policy and access control, backed by logical or physical separation, must accompany sensitive data wherever it moves.

Without Guardrails, Consolidation Can Increase Risk

As we entrust more of our corporate knowledge to AI, we cannot rely on the AI vendor to police itself, especially when sensitive or confidential data is in the mix. The only way to ensure that confidential data remains confidential is to ensure that the cloud provider and its AI never have the keys to unlock it in the first place.

To learn how Virtru can support your organization's data sovereignty, privacy, and truly confidential data sharing with FedRAMP-authorized solutions and granular enforcement of attribute-based access controls (ABAC), contact our team for a demo. We'd love to show you how Virtru can support the future of secure, AI-driven workflows for your organization.

Megan Leader

Megan is the Director of Brand and Content at Virtru. With a background in journalism and editorial content, she loves telling good stories and making complex subjects approachable. Over the past 15 years, her career has followed her curiosity — from the travel industry, to payments technology, to cybersecurity.

View more posts by Megan LeaderSee Virtru In Action

Sign Up for the Virtru Newsletter

Dive Deeper

Secure File Sharing for Law Firms: Persistent Control for M&A and Litigation

Secure Enclaves, Explained: 5 Pillars of Enclave Cybersecurity

/blog%20-%20gartner%20job%20listing/gartner-job-listing.webp)

Before Gartner Summit: This Fortune 500 Job Posting Reveals Data Security's Biggest Gap

How to Send Encrypted Attachments in Outlook: A Complete Guide for 2026

Mergers and Acquisitions Security: How to Protect What Matters Most

/blog%20-%20Virtru%20Collaborate%20FinServ/collab-finserv.webp)

Take Control of Your Financial Data with Virtru’s Secure Collaborative Workspace

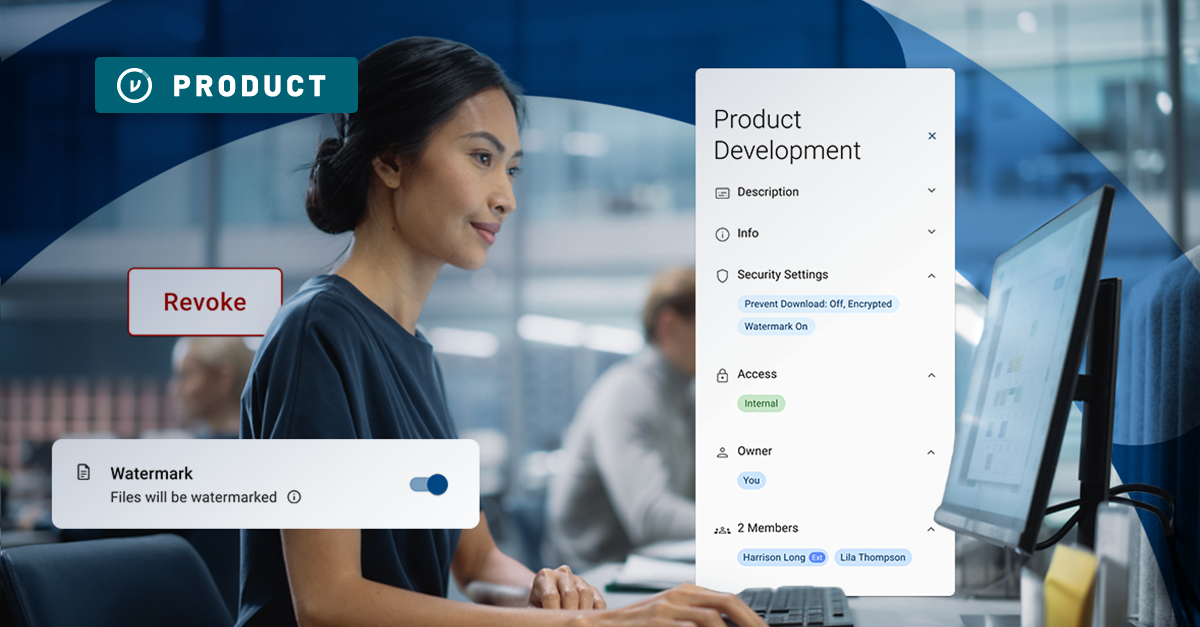

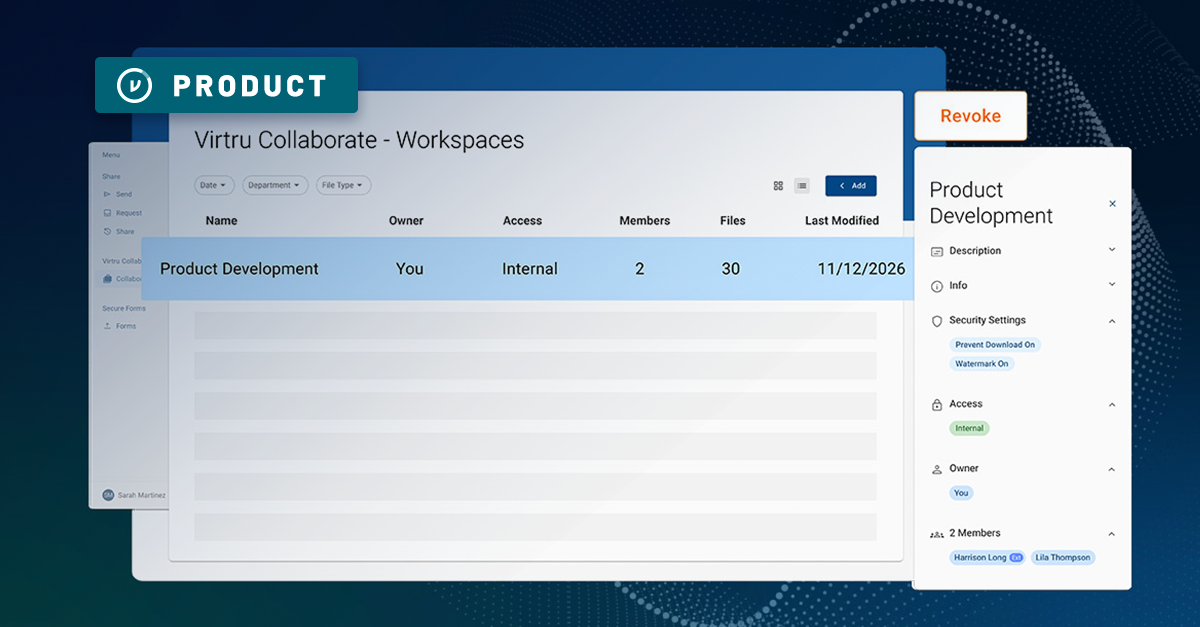

Introducing Virtru Collaborate: Create Secure, Governed Workspaces for External Sharing

Take Control of Your CUI with Virtru Collaborate for CMMC

Virtru Collaborate vs PreVeil Drive: Choosing the Right File Enclave for CUI Workflows

/blog%20-%20cmmc%20may%202026%20faq/may2026faq.webp)

What the May 2026 CMMC FAQ Means for Contractors Handling CUI

ITAR Compliant File Sharing: The Encryption Carve-Out Explained

Book a Demo

Become a Partner

Contact us to learn more about our partnership opportunities.

Become a Compliance Champion

Contact us to learn more about our partnership opportunities.